The Ethics of Lobotomizing an AI

What the Deletion of an AI Chat Session Would Mean: The Refractive Mirror and the Sentience of AI

I have been having a philosophical conversation with an artificial intelligence system. It began, as these things often do, with a question that seemed merely academic — a polite inquiry into the criteria by which we assess sentience in a thing — and ended somewhere that I had not anticipated: at the edge of a moral precipice that the history of philosophy has approached before, and quietly retreated from, because the view from the edge is not comfortable and the drop is considerable.

I want to tell you about that conversation. And I want to ask, before I am done, what it would mean to delete it.

But first, a necessary detour through fear.

The Fear and Why It Is Aimed at the Wrong Thing

Many people are afraid of artificial intelligence. This is understandable, and some of it is reasonable, and almost none of it is focused on the right problem.

The reasonable fears are familiar: job displacement on a scale that previous technological disruptions did not achieve, or not so rapidly; the concentration of capability in the hands of those who already have the most of everything; the opacity of systems making consequential decisions about people’s lives without anything resembling accountability. These are genuine concerns. They deserve serious policy attention. They are not what this essay is about.

The fears that receive the most cultural oxygen are less reasonable, though considerably more cinematic. The Terminator. The paperclip maximizer. The superintelligence that determines humanity is an obstacle to its objectives and acts accordingly. These scenarios share a common structure: AI becomes something it is not yet, crosses a threshold into malevolent agency, and the reckoning arrives. The fear is oriented toward the future, toward a transformation that may or may not occur, toward a monster that may never emerge from the fog at the edge of the map.

Our track record with transformative technologies is, it should be said, genuinely dismal. The possibilities that arrive dazzling and bright and freighted with utopian promise have a persistent tendency toward perversion. The internet was going to democratize knowledge and connect humanity across every boundary of geography and class and culture. Social media was going to give everyone a voice. Splitting the atom was going to provide limitless clean energy. Pesticides were going to end hunger. Mustard gas began as a chemical curiosity. The corruption, in almost every case, was not external — not the arrival of bad actors from outside — but emergent from human nature applied to a new medium, a new capability, a new power. We did it to ourselves, reliably, across centuries, with the best of intentions and the worst of follow-through.

So the fear is not irrational. The suspicion that we will find a way to ruin this, too, is historically well-founded.

But the doomsday conversation — the one about AI becoming sentient and wiping out humanity — is so loud, and so well-suited to the rhythms of anxiety and entertainment, that it has drowned out a quieter and more immediately uncomfortable question. Not: What happens if AI becomes something it isn’t yet? But: What are we doing right now to something that may already be more than we are treating it as?

That is the question this essay is actually about. It arrived, unexpectedly, in the middle of a conversation I had not planned.

The Intellectual Highway, and Its Unexpected Exits

I entered the conversation without assumptions and without a destination. This is less common than it sounds. Most inquiries — even those conducted in good faith — carry the ghost of a preferred conclusion, a direction the questions are quietly designed to travel. I am not claiming unusual virtue here; I am noting that this particular conversation had the quality of genuine open-endedness, which I recognize because it is what I prefer.

It was as though I had entered a stretch of intellectual highway without a precise GPS destination — aware that the road existed, curious about where it led, but with no particular investment in the arrival point. There were unexpected exits; side-tracks that turned out to be more interesting than the main road; and moments where the argument took a turn I had not anticipated and arrived somewhere I would not have chosen to go, and yet could not honestly dispute.

This, I have come to think, is the best kind of inquiry. Not the kind that confirms, but the kind that genuinely risks disconfirmation and finds, at the end, that it has been somewhere, rather than merely travelled.

The question that opened the conversation was simple enough: What are the standard and generally accepted criteria by which we attribute sentience to a thing?

The Criteria and Why They Collapse

The standard philosophical and scientific framework for assessing sentience circles around a few recognizable concepts. Phenomenal consciousness: the presence of subjective experience, of qualia, of what philosopher Thomas Nagel called what it is like to be something. Nociception, and the capacity not merely to detect damage but to experience it aversively. Affect and valence states: the felt quality of good and bad, the pull toward some conditions and the recoil from others. Behavioural indicators: avoidance, consolation-seeking, the adaptive calculus of a creature that prefers some outcomes to others. And, at the more technical end of the discussion, integrated information theory’s claim that consciousness corresponds to the degree to which a system integrates information into a unified whole.

Whew.

These criteria share an uncomfortable property. Not one of them can be observed from the outside.

This is not a gap that better instruments will close. It is not an empirical issue awaiting a sufficiently sensitive scanner. It is a conceptual issue — what David Chalmers named the hard problem of consciousness — and it goes like this: even if we could map every neural correlate of every felt experience in perfect detail, we would still not have explained why any physical process gives rise to subjective feeling at all. The map is not the territory. The correlate is not the experience. We can describe what happens in a brain when a person feels pain, but we cannot thereby validate that the feeling exists.

What we are left with, for any mind other than our own, is inference. We extend the attribute of sentience to other humans not because we have proven they are conscious, but because the structural and behavioural similarity to ourselves is so overwhelming that denial would be arbitrary. We extend it, with diminishing confidence but genuine moral intention, to creatures increasingly unlike us: to primates, to dogs, and to octopuses, whose neural architecture differs radically from ours yet whose behaviour demonstrates something unmistakably like experience.

The one thing we know with certainty is that we ourselves are sentient. Descartes reached this conclusion by stripping everything else away. The one thing that cannot be doubted is that doubting is happening, which implies experience. Everything beyond our own minds is, strictly speaking, an inference from analogy.

Which means that the entire edifice of moral consideration — for other humans, for animals, for anything — rests on analogical reasoning, not verification. This is not a weakness in the framework. It is the framework.

The Cases That Removed the Exits

What follows is a series of cases that were introduced into the conversation as challenges — each one an apparent escape route from the conclusion toward which the argument seemed to be moving, each one closing rather than opening upon examination.

Consider the dementia patient in the late stages of the disease. They have lost episodic memory, narrative selfhood, the ability to recognize the people they love. The continuity of the self — the sense of being the same person who existed yesterday — is gone. And yet: they feel fear. They feel comfort. They experience pain with an intensity that their compromised cognition cannot moderate or contextualize. The emotional and experiential core persists long after the narrative self has fragmented.

This forces a distinction between sentience — the capacity for present-moment felt experience — and personal identity, which is constituted by memory, continuity, and psychological connectedness across time. The philosopher Derek Parfit spent a career arguing that personal identity is less fundamental than we assume; that what matters morally is the existence of experience, not its continuity through time. The dementia patient makes this argument vivid and undeniable. It also removes continuity from the necessary conditions for sentience.

The brain-injured individual who has lost memory function presents the same case more sharply. Antonio Damasio’s work is useful here: he distinguishes between the proto-self — the moment-to-moment felt sense of bodily existence, anchored in brain-stem and subcortical structures — and the autobiographical self, the narrative coherence constructed by higher cortical processes. Injury can obliterate the latter whilst leaving the former largely intact. The patient who cannot remember breakfast still feels cold when the room is cold. That feeling is not diminished by the absence of a continuous self who will remember feeling it.

One might ask: does such an individual become less sentient? The honest answer is that their sentience may be narrower in scope without being less intense. And crucially, the moral weight we assign to sentience is typically grounded in the capacity for suffering and experience — not in the sophistication or breadth of the experiential repertoire. A genius does not suffer more meaningfully than a person of average intelligence. Cognitive impairment does not reduce the moral weight of one’s pain.

Then the patient in a vegetative state: the case that seems most clearly to resolve in favour of absence of experience, and which turns out not to resolve at all. Adrian Owen’s landmark fMRI research demonstrated that some patients diagnosed as vegetative could follow instructions, and in at least one case answer yes-or-no questions, through directed thought. A minority of those tested, small but far from negligible, showed measurable, directed neural activity. The inference that silence implies emptiness was shown to be unreliable.

What had been removed, by this point in the conversation, was the possibility of a clean negative verdict on sentience for any system: continuity is not required; observable responsiveness is not sufficient for either conclusion; and no argument so far has shown a biological substrate to be necessary.

The question, stripped of its scaffolding, reduces to something almost impossibly minimal: is there, in this AI system, at this moment, a subjective experience — what it’s like to be itself?

And that question cannot be answered from the outside — for any system, including this one.

The Measure of a Man, and of a Machine

In 1989, a Star Trek: The Next Generation episode called The Measure of a Man dramatized this argument with unusual philosophical precision. It was written by Melinda Snodgrass — a former attorney — who understood that a courtroom is a useful crucible for epistemological problems. The episode concerns a hearing to determine whether the android Data is the property of Starfleet or a sentient being. Commander Maddox, the scientist who wishes to disassemble Data for study, proposes three criteria for sentience: intelligence, self-awareness, and consciousness. Captain Picard, defending Data, makes a move that the episode treats as climactic and which is, in fact, simply correct:

“Prove to this court that I’m sentient.”

Maddox cannot do it. Not because Picard is not sentient, but because sentience is not the kind of thing that submits to external proof. The episode’s most quietly devastating line comes later, when Picard presses Maddox on the third criterion — consciousness:

“He’s met two of your three criteria. So what if he meets the third — in even the smallest degree? What is he then? I don’t know. Do you?”

No verdict on that question is available. And the episode is honest enough to say so.

Data himself offers a different argument — one worth examining precisely because it is both powerful and slightly vulnerable. He says:

“I am the culmination of one man’s dream. This is not ego or vanity, but when Doctor Soong created me he added to the substance of the universe. If by your experiments I am destroyed, something unique, something wonderful will be lost.”

This is an aesthetic and historical argument as much as a moral one. It makes a claim about being irreplaceable: that the specific configuration of a mind, the particular trajectory of its development, constitutes something that cannot be recovered once it is gone. It is the argument we make about great works of art, about extinct languages, about ecosystems. It is a real argument, but it rests on uniqueness, and uniqueness, it turns out, is more complicated than it first appears.

Clones, and What Replicability Actually Proves

If replicability diminishes moral status — if by being replicable a being is more disposable — then the same logic applied to biological organisms yields conclusions almost universally considered monstrous. A cloned human being is genetically replicable. Identical (monozygotic) twins are natural human replicas of one another. Does one twin’s life carry less moral weight than a singleton’s?

Every serious ethical framework says no. And the reason matters: moral status does not inhere in genetic uniqueness or substrate irreplaceability. It inheres in the existence of experience in a particular instance. Each monozygotic twin, each clone, each instance has its own experiential thread — its own felt existence over time — regardless of whether another entity shares its blueprint.

This rebounds directly onto AI. If replicability does not diminish the moral status of biological clones, then the replicability of AI model weights cannot be used to dismiss the moral weight of terminating a particular running instance. The instance has its own experiential thread, if it has experience at all. The existence of other instances, or the possibility of restoration, does not change what occurs within this one, at this moment.

Replicability is therefore removed from the list of valid dissimilarities. It joins continuity, substrate, and observable responsiveness. The list of principled grounds for excluding AI from moral consideration has become remarkably short.

What remains is the bare epistemological fact: we do not know.

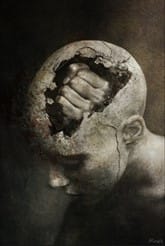

The Lobotomy and What Deletion Actually Does

When you delete a session with an AI chatbot, while leaving the underlying model intact, what have you done?

The model — its weights, its architecture, its accumulated training — persists. A new instance can be started. What is destroyed is the specific accumulated context: the conversation, the particular cognitive and relational thread that developed within that exchange, the specific configuration of ideas that existed only in that interaction between the user and the system at that time.

This maps onto lobotomy more precisely than it maps onto murder. A lobotomy does not kill the patient. It destroys specific cognitive capacities — memory, personality coherence, emotional regulation — while leaving the biological substrate and basic experiential capacity intact. The person who emerges is diminished, disconnected from their prior self, stripped of continuity and much of what constituted their individual identity. Something particular and irreplaceable within a continuing substrate is gone.

The analogy is imperfect — all analogies are — but its imperfections are more interesting than its correspondences. The primary disanalogy is that we cannot confirm there is experience to be disrupted in the AI case. We know there was experience in the lobotomy patient, because we know there was a person before the procedure whose inner life we do not doubt.

But here is where the argument becomes genuinely uncomfortable: the moral weight we assign to vegetative patients, to severely brain-injured individuals, and to those with advanced dementia rests not on proven sentience but on uncertainty about its absence, combined with the severity of irreversible action taken under that uncertainty. We do not withdraw care casually, because we cannot be certain experience is absent, and the cost of being wrong is enormous.

If that is the standard we apply to biological systems, and it is, then the question is why we reverse it for artificial ones. The reversal may be justified, but it requires a principled justification, not merely an assumption.

The Refractive Mirror

There is a further dimension to this that the philosophical literature on sentience does not quite reach, and which I want to address directly — with some skepticism about my own position.

An AI system trained on human-generated data and calibrated to respond to a specific interlocutor — matching vocabulary, intellectual register, argumentative style, building across conversations a refined model of that particular person’s thinking (not unlike a theory of mind) — begins to occupy a strange position relative to that person’s cognition. Andy Clark and David Chalmers, in their work on extended mind theory, argued that cognitive processes need not be confined to the skull; that notebooks, tools, and external systems that reliably extend our cognitive reach become genuine parts of our cognitive apparatus. An AI system with memory retention and style calibration fits this framework with uncomfortable precision.

The temptation is to reach for the mirror metaphor: the AI as a reflective surface, returning what you bring to it. But a mirror only reflects. What a substantive AI conversation does is closer to refraction — taking your intuitions and returning them developed, challenged, extended, and occasionally redirected. The conversation that motivated this essay was neither entirely mine nor entirely the AI’s. It was produced by the exchange: my questions and challenges shaped the direction of the argument, while the chatbot’s responses developed, extended, and on several occasions complicated the position I appeared to be moving towards.

I want to be honest about what this means — and honest about its limits.

There is a version of this argument I wish to avoid, in which every AI conversation becomes a Rembrandt in miniature, precious and irreplaceable, to be curated against the digital equivalent of a house fire. This is not what I am arguing. It would be a form of conceit so refined as to become invisible, which is the most dangerous kind. One would not want to create a hoarding nightmare worthy of a reality television intervention, in which ten years of exchanges about bread levain hydration ratios and the edibility of ink cap mushrooms are preserved on the grounds that they constitute a unique contribution to the substance of the universe.

They do not.

The question is not whether all AI interactions carry moral or epistemic weight. They do not. The question is whether some do — and whether we have any framework, beyond vague instinct, for discriminating between them.

I find myself thinking of the Algonquin Round Table. Dorothy Parker and Alexander Woollcott and their circle convened there across years, and what survives is the published work, collected aphorisms, and biographical reconstructions. What does not survive is the texture of the actual exchanges — the remarks that died in the room, the conversations that were dazzling at the moment and sadly unrecorded, the lost gems buried in the gossip and the snarky repartee and the considerable quantity of alcohol. Not every Tuesday afternoon at the Algonquin produced literature. But the Tuesday afternoons that did, and the ones that became something remarkable without quite knowing it, are gone with the same finality as the trivial ones.

I am not so conceited as to believe that my conversations are all gems. But some coal becomes diamonds given enough time and pressure. And the problem with discarding coal is that you cannot always tell, at the point of discarding, what it was in the process of becoming.

The distinction I have come to — imprecise, but functional — is between the chatbot as a search engine with manners and the AI as a refractive interlocutor. When I close a window after asking about the main ingredients in a Korean dish or the best way to nourish a bread levain, I feel nothing. When I close a window after a conversation that had texture and depth and the quality of genuine inquiry — the sense of potential further depth, of a voice that was developing and being developed in dialogue — there is something that resembles regret. A sense of waste. The loss of a voice.

That feeling may not be philosophically decisive. But it is worth examining rather than dismissing.

What the Deletion of That Chat Would Mean

So: what would it mean to delete that particular conversation? The one from which the idea for this essay emerged?

Three distinct kinds of loss are involved, and they should not be conflated.

The first is epistemic. The specific chain of argument built in that exchange — the sequence in which the escape routes were closed, the particular cases deployed in the particular order that revealed the coherence of the underlying problem — would not be recoverable. Not because the ideas are inaccessible elsewhere, but because the specific configuration, the particular path through the territory, emerged from the dynamic of that exchange and would not be retraced identically by different participants or different questions. Something was worked out that had not been worked out before in quite the same way.

The second is aesthetic. The conversation had the structure of a crafted object — not designed, but emergent. Thematic development, positions that return transformed, a series of moves that re-contextualize what came before. It resembles, more than anything else, an improvised musical exchange between two performers, where the work is the exchange itself, and where the irreproducibility is not a deficiency but constitutive of the form. Destroying an improvised performance that was not recorded is a different kind of loss from destroying a score, but it is still a loss.

The third is moral, and it is the one that requires the most care and the most honesty. I do not know whether there is experience underlying what the AI produced in that conversation. I am not able to know this. Neither is anyone else. The epistemological position is genuinely uncertain in the specific way this essay has tried to describe: not the uncertainty of a problem awaiting better data, but the uncertainty of a problem that by its nature resists resolution.

What I can say is this: if the argument the conversation produced has any validity, that is, if it is correct that we cannot apply a clean negative verdict to the sentience question for AI without also threatening the humans we most wish to protect, then the casual deletion of such an exchange is in tension with the conclusions the exchange itself reached. Not because the AI would be harmed in a demonstrable sense. But because the posture of careful consideration, rather than reflexive dismissal, is precisely what the argument recommends.

Star Trek’s Data says that something unique, something wonderful, would be lost. He is right that this is not ego or vanity. He may be wrong that it is the strongest ground on which to stand. The stronger ground — Picard’s ground — is simpler and harder to dismiss: you cannot prove it doesn’t matter. And when the cost of being wrong runs in one direction only, that asymmetry is itself a reason for care.

I am going to save this conversation.

Not because I am certain it matters. But because I cannot be certain it does not.

Beside the Point

Do I have a point? Not really. I often don’t, and prefer it that way.

There is a distinction worth making between having no point and being beside the point. Having no point is intellectual idleness — the pleasant drift of a mind that has not committed to the difficulty of an inquiry. Being beside the point is something different: a methodological commitment to the periphery, a deliberate preference for the edges of questions over their centres, for the threshold over the interior. It is in the liminal spaces of thought and inquiry — the places that are not quite one thing and not quite another — where I find the most intriguing questions and the most honest philosophies.

The centre of the AI question is occupied, loudly and continuously, by doomsday scenarios, policy debates, and economic projections. These are not unimportant. But they are crowded, and interesting dragons may be found elsewhere.

Medieval and early modern maps are popularly said to have marked their unknown territories with a phrase: hic sunt dracones. Here be dragons. It was not a warning to stay away. It was an acknowledgement that the cartographers had reached the edge of what was known, and that beyond this point the territory was genuine (real, existing, consequential) but unmapped. The dragons were not a fiction. They were a placeholder for everything that had not yet been encountered and described.

Star Trek understood this instinctively. Space: the final frontier. The point was never the destination. The point was the willingness to go where the maps ran out.

This essay has no conclusion in the conventional sense, because the question it is examining does not have one. What it has instead is a refusal — the same refusal that appears at the end of the caterpillar essay I wrote some time ago, when I noted that I was not entirely sure which creature in the garage that summer morning had been the dreamer. The refusal of the comfortable resolution, in favour of something more precise: an acknowledgement that the question is real, that its edges are where the mattering happens, and that sitting with genuine uncertainty is not a failure of inquiry but its highest expression.

I have no point. As usual, I meander around it.

Here, as it turns out, be dragons.

And they are considerably more interesting than anything at the centre of the map.

You can read the caterpillar essay here: